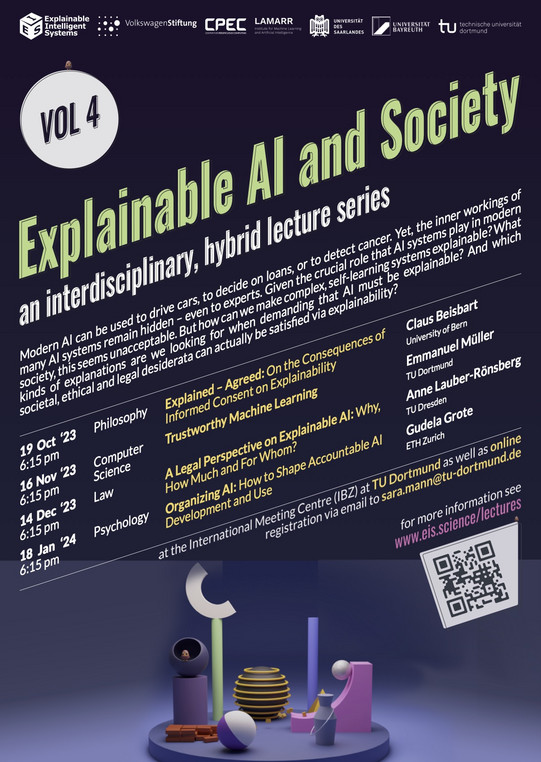

Interdisciplinary Lecture Series "Explainable AI and Society" in the Winter Semester 2023/24

Important update: Due to the rail strike, the talk by Daniel Neider can be attended online only via Zoom.

The fourth installment of the lecture series "Explainable AI and Society" will take place during the winter semester 2023/2024, both online and in person at the IBZ, TU Dortmund.

Modern AI can be used to drive cars, to decide on loans, or to detect cancer. Yet, the inner workings of many AI systems remain hidden – even to experts. Given the crucial role that AI systems play in modern society, this seems unacceptable. But how can we make complex, self-learning systems explainable? What kinds of explanations are we looking for when demanding that AI must be explainable? And which societal, ethical and legal desiderata can actually be satisfied via explainability?

The interdisciplinary, hybrid lecture series presents the latest research on these and related topics and invites exchange with researchers, students, and the interested public.

Lecture Dates

- 19.10.23, 6.15 p.m. (CEST): Claus Beisbart, University of Bern (philosophy):

"Explained – Agreed. On the Consequences of Informed Consent on Explainability"

- 16.11.23, 6.15 p.m. (CET): [update: online only] Daniel Neider, TU Dortmund (computer science):

"Verification of Neural Networks – And What It Might Have to Do With Explainability"

- 14.12.23, 6.15 p.m. (CET): Anne Lauber-Rönsberg, TU Dresden (law):

"A Legal Perspective on Explainable AI: Why, How Much and For Whom?"

- 18.01.24, 6.15 p.m. (CET): Gudela Grote, ETH Zurich (psychology):

"Organizing AI: How to Shape Accountable AI Development and Use"

Registration

To register, send an e-mail with the subject "Registration" to sara.mann@tu-dortmund.de. Indicate which lecture(s) you would like to attend and whether you will attend online or in person.

For further information, visit www.eis.science/lectures

The lecture series is organized by the research project "Explainable Intelligent Systems", funded by the Volkswagen Foundation.